PyTorch Tutorial 13 - Feed-Forward Ne...

The tutorial's Python code is as follows:

# MNIST

# DataLoader, Transformation

# Multilayer Neural Net, activation function

# Loss and Optimizer

# Training loop (batch training)

# Model evaluation

# GPU support

"""A multilayer neural network for digit classification, based on the well-known MNIST dataset."""

import torch

import torch.nn as nn

import torchvision

import torchvision.transforms as transforms

import matplotlib.pyplot as plt

# Device configuration

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# Hyperparameters

input_size = 784  # 28*28: each image is 28x28 pixels, flattened to a 784-element vector

hidden_size = 100

num_classes = 10  # the ten digits 0-9

num_epochs = 2

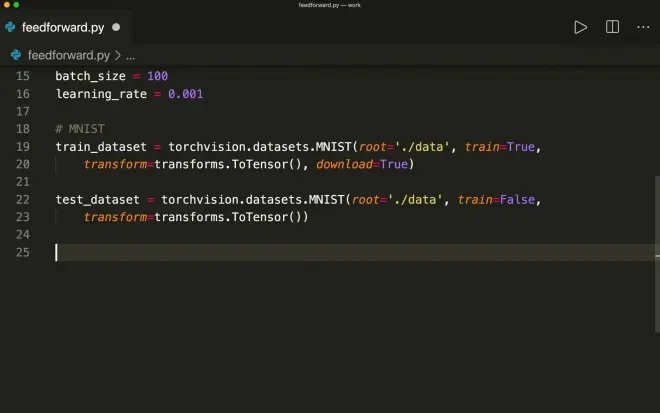

batch_size = 100

learning_rate = 0.001  # learning rate used by the optimizer

# MNIST dataset

train_datasets = torchvision.datasets.MNIST(root='./Data', train=True, transform=transforms.ToTensor(), download=True)

test_datasets = torchvision.datasets.MNIST(root='./Data', train=False, transform=transforms.ToTensor())

train_loader = torch.utils.data.DataLoader(dataset=train_datasets, batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(dataset=test_datasets, batch_size=batch_size, shuffle=False)

"""examples = iter(train_loader)

samples, labels = examples.next()"""

examples = iter(test_loader)

samples, labels = next(examples)

print(samples.shape, labels.shape)

for i in range(6):

    plt.subplot(2, 3, i+1)

    plt.imshow(samples[i][0], cmap='gray')

# plt.show()

# Fully connected neural network with one hidden layer

class NeuralNet(nn.Module):

    def __init__(self, input_size, hidden_size, num_classes):

        super(NeuralNet, self).__init__()

        self.input_size = input_size

        self.l1 = nn.Linear(input_size, hidden_size)

        self.relu = nn.ReLU()

        self.l2 = nn.Linear(hidden_size, num_classes)

    def forward(self, x):

        out = self.l1(x)

        out = self.relu(out)

        out = self.l2(out)

        # no activation and no softmax at the end

        return out

model = NeuralNet(input_size, hidden_size, num_classes).to(device)
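The network takes flattened images, so each batch has to be reshaped from `[batch, 1, 28, 28]` to `[batch, 784]` before the forward pass. The following is a minimal sketch of that shape flow, not the tutorial's own code: it uses a random tensor in place of real MNIST data, and `dummy_images` and `sketch_model` are hypothetical names. The `nn.Sequential` stack is only assumed to mirror the layer sizes of `NeuralNet`.

```python
import torch
import torch.nn as nn

# Assumed stand-in for one MNIST batch: 100 grayscale images of 28x28 pixels.
dummy_images = torch.rand(100, 1, 28, 28)

# Same layer sizes as the tutorial's NeuralNet (784 -> 100 -> 10),
# written with nn.Sequential purely for brevity.
sketch_model = nn.Sequential(
    nn.Linear(784, 100),
    nn.ReLU(),
    nn.Linear(100, 10),
)

flat = dummy_images.reshape(-1, 28 * 28)  # [100, 1, 28, 28] -> [100, 784]
logits = sketch_model(flat)               # raw scores, one row per image

print(flat.shape)    # torch.Size([100, 784])
print(logits.shape)  # torch.Size([100, 10])
```

Each output row holds ten raw scores (logits), one per digit class.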

# Loss and optimizer

criterion = nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)
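The model returns raw scores without a final softmax because `nn.CrossEntropyLoss` applies log-softmax internally. The sketch below illustrates this with made-up logits and targets (both are assumptions, not values from the tutorial), comparing the loss against a manual `log_softmax` followed by `nll_loss`.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)           # made-up raw scores: 4 samples, 10 classes
targets = torch.tensor([3, 0, 9, 1])  # made-up class indices

# CrossEntropyLoss works directly on raw logits...
loss_ce = nn.CrossEntropyLoss()(logits, targets)

# ...and matches log-softmax followed by negative log-likelihood.
loss_manual = F.nll_loss(F.log_softmax(logits, dim=1), targets)

print(torch.allclose(loss_ce, loss_manual))  # True
```

Adding an explicit softmax layer to the model would therefore apply the normalization twice.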

# Train the model

n_total_steps = len(train_loader)

for epoch in range(num_epochs):

    for i, (images, labels) in enumerate(train_loader):

        # origin shape: [100, 1, 28, 28]

        # resized: [100, 784]

        images = images.reshape(-1, 28 * 28).to(device)

        labels = labels.to(device)

        # Forward pass

        outputs = model(images)

        loss = criterion(outputs, labels)

        # Backward and optimize

        optimizer.zero_grad()

        loss.backward()

        optimizer.step()

        if (i + 1) % 100 == 0:

            print(f'Epoch [{epoch + 1}/{num_epochs}], Step [{i + 1}/{n_total_steps}], Loss: {loss.item():.4f}')

# Test the model

# In test phase, we don't need to compute gradients (for memory efficiency)

with torch.no_grad():

    n_correct = 0

    n_samples = 0

    for images, labels in test_loader:

        images = images.reshape(-1, 28 * 28).to(device)

        labels = labels.to(device)

        outputs = model(images)

        # torch.max returns (value, index); the index is the predicted class

        _, predicted = torch.max(outputs, 1)

        n_samples += labels.size(0)

        n_correct += (predicted == labels).sum().item()

    acc = 100.0 * n_correct / n_samples

    print(f'Accuracy of the network on the 10000 test images: {acc} %')
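The evaluation takes the index of the largest raw score as the prediction. If per-class probabilities are also wanted, one option is to apply softmax to the logits, as in this minimal sketch. It uses made-up logits rather than real model output, and the variable names are hypothetical.

```python
import torch

torch.manual_seed(0)
logits = torch.randn(5, 10)  # made-up logits: 5 samples, 10 classes

probs = torch.softmax(logits, dim=1)         # each row sums to 1
confidence, predicted = torch.max(probs, 1)  # top probability and its class index

# Softmax preserves ordering, so the predicted class is unchanged.
same = torch.equal(predicted, torch.argmax(logits, dim=1))
print(same)  # True
```

Because the ordering of scores is preserved, accuracy is the same whether it is computed from logits or from probabilities.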